At the start of the last PI Planning event, we had a quick introduction to a more structured way to score PI Objectives. The approach takes a broader view and supports a platform initiative to make tradeoffs that favor disproportionate value relative to the platform capacity used. We also realized that each PI Objective needed to be more specific, so that we could know it would make a measurable contribution to a key result indicating that the platform was moving toward a strategic theme objective.

Providing that additional clarity required deeper conversations and alignment on outcome expectations. The focus on being able to measure contribution reinforced the desire to concentrate on high-value, high-priority work that is aligned with the strategic direction of the platform.

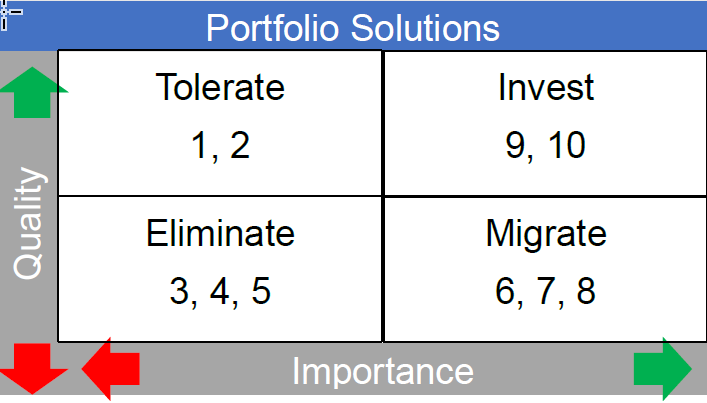

The structured scoring approach introduced a two-dimensional model for deciding on a PI Objective score. The first dimension, referred to as T.I.M.E., considers the strategic importance and operational quality of the application / solution / business capability that would be affected by the work to deliver on a PI Objective.

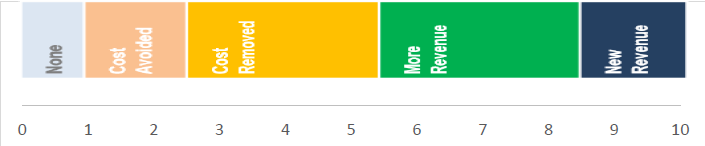

The second dimension, IMPACT, indexes the expected economic benefit of the outcome of delivering on a PI Objective.

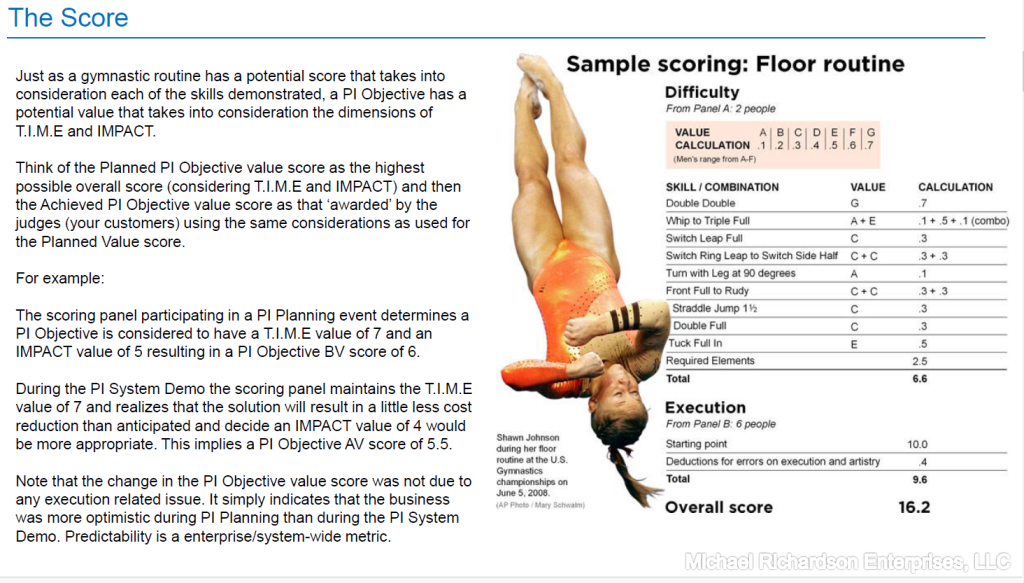

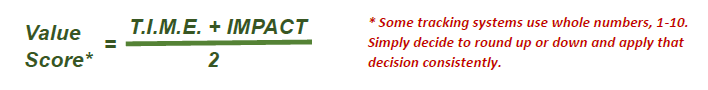

The PI Objective planned value score is the sum of the two dimension indices (T.I.M.E. and IMPACT) divided by two.
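The averaging rule above can be sketched in a few lines of Python. This is a minimal illustration only: the function name `planned_value` and the example index values are assumptions, since the actual index scales are defined in the figures rather than in the text.

```python
def planned_value(time_index: float, impact_index: float) -> float:
    """Return the PI Objective planned value score.

    The score is the sum of the T.I.M.E. index and the IMPACT index
    divided by two, as described in the text.
    """
    return (time_index + impact_index) / 2


# Hypothetical index values, chosen only to show the arithmetic.
print(planned_value(8, 6))  # 7.0
```

Because the score is a plain average, both dimensions carry equal weight: a high IMPACT index cannot fully offset a low T.I.M.E. index, and vice versa.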

During the Program Increment, teams should refer to the PI Objective planned value score as an indicator of where to focus their capacity. This is consistent with the purpose of T.I.M.E. and IMPACT: a more structured way to score PI Objectives so that tradeoffs favor disproportionate value relative to the platform capacity used.

During the Program Increment demonstrations, the context should be set to that of the PI Objectives. The teams worked hard with the business and product owners to write PI Objectives that were specific and measurable. With clarity about what was expected and how the impact would be measured, the demonstration feedback can be more focused, and it is a significant consideration in determining the PI Objective actual value score.

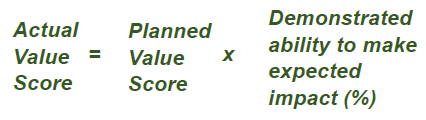

Setting the PI Objective actual value score does not require revisiting T.I.M.E. and IMPACT. It does, however, require the same panel of people who set the PI Objective planned value score. Keeping the same panel, together with having written the PI Objectives to be specific and measurable, makes it easier to recall the considerations made up to 3 months earlier when setting the PI Objective actual value.

When all goes well, the demonstrated ability to make the expected impact is 100%, and the PI Objective actual value equals the planned value. There is no need to be exact. If the demonstrated impact is less than 100%, lower the PI Objective actual value by 1 point, or more if that better expresses the judgement of the panel. Likewise, if the demonstration shows the potential to get more than 100% of the expected impact, raise the PI Objective actual value by 1 point, or more if that better expresses the judgement of the panel.
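The adjustment rule can be expressed as a small sketch. The function name `actual_value` and the signed `adjustment` parameter are illustrative assumptions; the text does not say whether scores are bounded, so no clamping is applied here.

```python
def actual_value(planned: float, adjustment: int = 0) -> float:
    """Return the PI Objective actual value score.

    `adjustment` is the panel's judgement in whole points:
      0         -> the demonstration showed the expected impact (100%)
      negative  -> less than the expected impact (-1, or lower)
      positive  -> potential for more than the expected impact (+1, or higher)
    """
    return planned + adjustment


# Hypothetical planned score of 7.0 under three panel judgements.
print(actual_value(7.0))      # 7.0, impact as expected
print(actual_value(7.0, -1))  # 6.0, somewhat below expectations
print(actual_value(7.0, 2))   # 9.0, well above expectations
```

The size of the adjustment remains a panel judgement; the code only records it and does not derive it from any measured percentage.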

Ultimately, the Predictability Measure should be used as a continuous improvement mechanism. It is not a delivery performance metric. It is an indicator of the ability of ‘the business’ / system to stand up and score the value of an outcome (as expressed by a PI Objective) before it is built. The Predictability Measure indicates the collective ability to understand the customer, the customer’s pains, and the possible gains, as well as the ability of the people who are implementing the solution to understand both.
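The text does not spell out how the Predictability Measure is calculated. Assuming it follows the common SAFe convention of total actual value divided by total planned value, expressed as a percentage, a rough sketch might look like the following. The function name `predictability` and the sample scores are hypothetical.

```python
def predictability(objectives: list[tuple[float, float]]) -> float:
    """Return actual value as a percentage of planned value.

    Each item is a (planned_value, actual_value) pair for one PI Objective.
    Assumption: the measure is sum(actual) / sum(planned) * 100.
    """
    planned_total = sum(planned for planned, _ in objectives)
    actual_total = sum(actual for _, actual in objectives)
    if planned_total == 0:
        raise ValueError("total planned value must be greater than zero")
    return 100 * actual_total / planned_total


# Hypothetical (planned, actual) scores for three PI Objectives.
scores = [(7.0, 7.0), (5.0, 4.0), (8.0, 9.0)]
print(predictability(scores))  # 100.0
```

In this example one objective scored below plan and another above, so the aggregate still lands at 100%. Read as a continuous improvement signal, the per-objective differences are as informative as the total.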